How to Score Vendor Proposals Without Losing Your Mind


You asked for proposals. The vendors delivered. Now you're staring at a stack of documents—each formatted differently, each claiming to be the best option—and you need to turn this pile into a clear recommendation before the next board meeting.

If you've ever tried to compare six proposals by reading them one after another and then trying to remember what each vendor said about insurance, you know the problem. Human memory isn't designed for this. Your brain starts blending Vendor C's experience with Vendor E's pricing, and by the fifth proposal, you're just trying to get through it.

The solution isn't to read more carefully. It's to score systematically.

Here's a practical framework that works for any project type, requires no procurement experience, and produces a defensible recommendation you can present with confidence.

Step 1: Define Your Criteria Before You Read a Single Proposal

This step should happen when you write the RFP, not when proposals arrive. But if you didn't set criteria upfront, do it now—before you open any proposals.

Why? Because once you start reading proposals, your criteria will unconsciously shift to favor whatever impressed you first. This is anchoring bias, and it's one of the biggest threats to fair evaluation.

The five universal evaluation categories:

Most vendor evaluations can be organized around these five areas, regardless of the service type:

Pricing (typically 25–40% weight)

What will this cost, and does the pricing structure make sense? Look at total cost, not just the headline number. A vendor who prices low but excludes services you need will cost more in the long run.

Relevant Experience (typically 15–25% weight)

Has this vendor done this type of work for organizations like yours? A landscaping company with 20 years of commercial experience but zero HOA experience isn't as relevant as one with 5 years focused on residential communities.

Proposed Approach (typically 15–20% weight)

How will they do the work? Their approach tells you whether they understand your needs and have a realistic plan. Vague approaches ("We will provide excellent service") score low. Specific approaches ("We will assign a dedicated crew of 4 for your property, with a supervisor on-site every Tuesday and Thursday") score high.

Qualifications and Compliance (typically 10–20% weight)

Do they have the required insurance, licenses, certifications, and credentials? This is often pass/fail—either they meet the minimum requirements or they don't.

References and Reputation (typically 10–15% weight)

What do their current and past clients say? Strong references from similar organizations carry the most weight.

Step 2: Assign Weights That Reflect Your Priorities

The weights tell your board (and yourself) what matters most for this specific project.

There's no "correct" weighting—it depends on context. For a routine service contract where multiple vendors are qualified, price might warrant 40%. For a complex construction project where quality is paramount, you might weight experience at 30% and pricing at only 20%.

Here's a sample weighting for a typical service contract:

- Pricing: 30%

- Relevant Experience: 25%

- Proposed Approach: 20%

- Qualifications/Compliance: 15%

- References: 10%
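If you keep your weights in a spreadsheet or a script, a quick sanity check catches two common slips: weights that don't add up to 100%, and a category pushed well outside the typical ranges listed in Step 1. The sketch below is a minimal Python illustration. The category names and ranges come from this article, and the `check_weights` function is hypothetical.

```python
# Sample weights from the list above, in percent.
SAMPLE_WEIGHTS = {
    "Pricing": 30,
    "Relevant Experience": 25,
    "Proposed Approach": 20,
    "Qualifications/Compliance": 15,
    "References": 10,
}

# Typical (low, high) ranges from Step 1, in percent.
TYPICAL_RANGES = {
    "Pricing": (25, 40),
    "Relevant Experience": (15, 25),
    "Proposed Approach": (15, 20),
    "Qualifications/Compliance": (10, 20),
    "References": (10, 15),
}


def check_weights(weights: dict[str, int]) -> list[str]:
    """Return warnings about the weights; an empty list means they look sound."""
    warnings = []
    total = sum(weights.values())
    if total != 100:
        warnings.append(f"Weights sum to {total}%, not 100%.")
    for category, weight in weights.items():
        # Categories without a typical range are only checked against 0-100%.
        low, high = TYPICAL_RANGES.get(category, (0, 100))
        if not low <= weight <= high:
            warnings.append(
                f"{category} at {weight}% is outside the typical {low}-{high}% range."
            )
    return warnings


print(check_weights(SAMPLE_WEIGHTS))  # prints []
```

A weighting like the construction example in this step (experience at 30%, pricing at 20%) would trigger range warnings for both of those categories. That's expected: the ranges are guidance, so a warning is a prompt to confirm the choice was deliberate, not an error.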

Write these down before you start evaluating. They're your guardrails against subjective drift.

Step 3: Create Your Scoring Scale

Keep it simple. A 1–5 scale works for most evaluations:

5 — Exceptional. Exceeds requirements. Among the strongest responses you could imagine for this category.

4 — Strong. Fully meets requirements with some areas of distinction. No concerns.

3 — Adequate. Meets minimum requirements. Nothing wrong, nothing exceptional.

2 — Below expectations. Partially meets requirements. Has gaps or concerns that would need to be addressed.

1 — Inadequate. Addresses the category but does not meet requirements.

If a vendor skips a category entirely, record a 0 (non-responsive) rather than a 1, so a missing answer stays distinguishable from a weak one.

Resist the urge to create a 1–10 scale. More granularity feels more precise, but it actually makes scoring harder because you spend too long debating whether a response is a "6" or a "7." A 5-point scale forces clearer distinctions.

Step 4: Score One Category at a Time, Not One Vendor at a Time

This is the most important tactical tip in this entire article.

Don't read Vendor A's entire proposal, score it, then move to Vendor B. Instead, read all vendors' responses to one category at a time.

Read all six pricing sections back to back. Score them. Then read all six experience sections. Score them. And so on.
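If you track scores in a small script rather than on paper, you can enforce the category-first order directly. This minimal sketch assumes a score sheet stored as nested dicts (category, then vendor) with `None` for cells not yet scored; it always points you at the next unscored vendor in the current category before moving to the next one. The `next_to_score` helper and the sheet layout are hypothetical.

```python
from typing import Optional

# Hypothetical score sheet: category -> vendor -> score (None = not yet scored).
ScoreSheet = dict[str, dict[str, Optional[int]]]


def next_to_score(sheet: ScoreSheet) -> Optional[tuple[str, str]]:
    """Return the next (category, vendor) cell to score, finishing each
    category for every vendor before moving on to the next category.

    Relies on dicts preserving insertion order (guaranteed since Python 3.7).
    """
    for category, scores in sheet.items():
        for vendor, score in scores.items():
            if score is None:
                return category, vendor
    return None  # every cell has been scored


categories = ["Pricing", "Relevant Experience", "Proposed Approach",
              "Qualifications/Compliance", "References"]
vendors = ["A", "B", "C", "D", "E", "F"]

# Start with an empty sheet: one row per category, one cell per vendor.
sheet = {c: {v: None for v in vendors} for c in categories}

sheet["Pricing"].update({"A": 4, "B": 3, "C": 2})
print(next_to_score(sheet))  # prints ('Pricing', 'D')
```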

Why? Three reasons.

Consistency. When you evaluate pricing across all vendors in one sitting, you develop a natural sense of what "average" looks like for this field. Vendor C's pricing jumps out as notably high or low because you just read five other pricing structures.

Reduced bias. When you read one vendor's complete proposal, you form an overall impression that colors your scoring of individual categories. Reading category by category keeps each evaluation independent.

Speed. It's faster. You get into a rhythm with each category and can process the information more efficiently.

Step 5: Handle Pricing Systematically

Pricing deserves its own process because it's where most comparison errors occur.

First, normalize the numbers. If vendors priced differently (monthly vs. annual, per-visit vs. per-acre), convert everything to the same unit before comparing. The easiest common unit is total annual cost including all base services.

Second, separate base pricing from optional services. Many proposals include add-on services mixed in with base pricing. Pull these apart so your base-to-base comparison is clean.

Third, watch for exclusions. Vendor A's price might look low until you realize they excluded irrigation management, which every other vendor included. A "low" price that doesn't include essential services isn't actually low.
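The three normalization steps can be sketched in a few lines of Python. Everything here is an illustrative assumption: the `LineItem` structure, the unit labels, the visits-per-year and acreage figures used for conversion, and the sample landscaping services. Swap in your own project's numbers.

```python
from dataclasses import dataclass

# Hypothetical project assumptions used for unit conversion; replace with your own.
VISITS_PER_YEAR = 36
PROPERTY_ACRES = 12.5


@dataclass
class LineItem:
    service: str
    amount: float
    unit: str               # "annual", "monthly", "per_visit", or "per_acre"
    optional: bool = False  # add-on services stay out of the base price


def annualize(item: LineItem) -> float:
    """Convert one line item to an annual dollar figure (KeyError on unknown units)."""
    multipliers = {
        "annual": 1,
        "monthly": 12,
        "per_visit": VISITS_PER_YEAR,
        "per_acre": PROPERTY_ACRES,  # assumes per-acre prices are quoted per year
    }
    return item.amount * multipliers[item.unit]


def base_annual_cost(items: list[LineItem], required_services: set[str]) -> tuple[float, list[str]]:
    """Return (annual base cost, required services the proposal leaves out)."""
    base = [i for i in items if not i.optional]
    total = sum(annualize(i) for i in base)
    covered = {i.service for i in base}
    missing = sorted(required_services - covered)
    return total, missing


required = {"mowing", "irrigation management", "seasonal cleanup"}
vendor_a = [
    LineItem("mowing", 1_500, "monthly"),
    LineItem("seasonal cleanup", 2_000, "annual"),
    LineItem("tree trimming", 400, "per_visit", optional=True),
]
total, missing = base_annual_cost(vendor_a, required)
print(total, missing)  # prints 20000 ['irrigation management']
```

Keeping optional items out of the base total, and reporting missing required services separately instead of silently adding an estimate for them, leaves the judgment about exclusions with you.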

A simple pricing scoring approach:

Calculate the average base price across all proposals. Vendors within 10% of the average score a 3. Vendors more than 10% below average score a 4 (and merit scrutiny—why are they cheaper?). Vendors more than 10% above average score a 2. Adjust up or down based on value justification.

The cheapest vendor doesn't automatically get a 5. Unusually low pricing is often a red flag indicating the vendor misunderstood the scope, plans to cut corners, or will come back with change orders.
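The 10% rule translates directly into code. This sketch assumes you already have one normalized annual base price per vendor; the sample prices are made up, and the output is only a starting score to adjust for value, as described above. It never awards a 5, consistent with the point that the cheapest bid shouldn't automatically get top marks. The `price_scores` name is hypothetical.

```python
def price_scores(base_prices: dict[str, float], band: float = 0.10) -> dict[str, int]:
    """Starting pricing scores using the +/-10% rule.

    More than `band` below the average -> 4 (and worth a closer look),
    more than `band` above -> 2, otherwise (within the band) -> 3.
    """
    average = sum(base_prices.values()) / len(base_prices)
    scores = {}
    for vendor, price in base_prices.items():
        if price < average * (1 - band):
            scores[vendor] = 4
        elif price > average * (1 + band):
            scores[vendor] = 2
        else:
            scores[vendor] = 3
    return scores


# Made-up normalized annual base prices; the average here is 50,000.
prices = {"A": 42_000, "B": 50_000, "C": 51_000, "D": 49_000, "E": 58_000, "F": 50_000}
print(price_scores(prices))
# prints {'A': 4, 'B': 3, 'C': 3, 'D': 3, 'E': 2, 'F': 3}
```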

Step 6: Call References (Really)

Most people skip this step, or they call references and ask softball questions that produce useless answers.

If you're going to call references, make it count. These three questions reveal more than a 20-minute general conversation would:

"Would you hire them again?" The hesitation (or lack thereof) before the answer tells you everything. A confident "Absolutely" is very different from "Well... they were okay."

"What was the biggest challenge during the contract, and how did they handle it?" Every vendor relationship hits bumps. You want to know whether this vendor communicated proactively, solved problems quickly, and took responsibility—or whether they dodged, delayed, and blamed.

"Is there anything you wish you'd known before hiring them?" This open-ended question gives references permission to share concerns they wouldn't volunteer otherwise.

Score references based on the quality and consistency of feedback, not just whether the vendor provided them. Any vendor can find three people to say nice things. Look for specificity, enthusiasm, and alignment with what the vendor claimed in their proposal.

Step 7: Calculate Final Scores

Here's where the math comes together. For each vendor, multiply each category score by the category weight, then sum the results.

Example:

| Category | Weight | Vendor A Score | Weighted |
|---|---|---|---|
| Pricing | 30% | 4 | 1.20 |
| Experience | 25% | 3 | 0.75 |
| Approach | 20% | 4 | 0.80 |
| Qualifications | 15% | 5 | 0.75 |
| References | 10% | 3 | 0.30 |
| Total | 100% | | 3.80 |

Do this for every vendor. The highest total score is your data-driven recommendation.
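Here is the same arithmetic as the table, as a short Python sketch. The weights and Vendor A's scores come from the example above; the `weighted_total` name is hypothetical, and the function refuses to compute a total if a category is missing, so an unscored category can't quietly drop out of the sum.

```python
# Category weights from the example table, as fractions of 1.
WEIGHTS = {
    "Pricing": 0.30,
    "Experience": 0.25,
    "Approach": 0.20,
    "Qualifications": 0.15,
    "References": 0.10,
}


def weighted_total(scores: dict[str, int], weights: dict[str, float] = WEIGHTS) -> float:
    """Multiply each category score by its weight and sum the results."""
    if set(scores) != set(weights):
        missing = sorted(set(weights) - set(scores))
        extra = sorted(set(scores) - set(weights))
        raise ValueError(f"Category mismatch: missing {missing}, unexpected {extra}")
    # Round to two decimals, matching the precision shown in the table.
    return round(sum(weights[c] * scores[c] for c in weights), 2)


vendor_a = {"Pricing": 4, "Experience": 3, "Approach": 4, "Qualifications": 5, "References": 3}
print(f"{weighted_total(vendor_a):.2f}")  # prints 3.80
```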

But—and this is important—the score is a starting point, not the final answer. If the top two vendors are separated by less than 0.3 points, they're essentially tied, and other factors (local presence, gut feeling after reference calls, board preferences) can reasonably break the tie.
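To apply the 0.3-point rule the same way every time, you can rank the totals and list every vendor within the margin of the leader. This is a sketch with made-up totals and a hypothetical `rank_vendors` function. It treats a gap of exactly 0.3 as not tied, following the "less than 0.3 points" wording, and rounds each gap to two decimals to avoid floating-point noise.

```python
def rank_vendors(totals: dict[str, float], tie_margin: float = 0.3):
    """Sort vendors by weighted total and list those effectively tied with the leader."""
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    if not ranked:
        return [], []
    top = ranked[0][1]
    # Round each gap so that, e.g., 3.8 - 3.5 (0.2999... in floating point)
    # counts as a gap of 0.3 rather than slipping under the margin.
    contenders = [vendor for vendor, total in ranked if round(top - total, 2) < tie_margin]
    return ranked, contenders


totals = {"A": 3.80, "B": 3.55, "C": 3.10, "D": 3.40}
ranked, contenders = rank_vendors(totals)
print(ranked)      # prints [('A', 3.8), ('B', 3.55), ('D', 3.4), ('C', 3.1)]
print(contenders)  # prints ['A', 'B']
```

When `contenders` holds more than one vendor, the numbers alone don't settle the choice, and the tie-breakers described above come into play.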

Step 8: Write Your Recommendation

A good recommendation isn't "I picked Vendor A because they got the highest score." It's a narrative that explains the story behind the numbers.

A strong recommendation includes:

- The process you followed (how many vendors were solicited, how many responded, and what criteria were used)

- The top 2–3 vendors and what distinguished them

- Your recommended vendor and 2–3 specific reasons why

- Any risks or caveats the decision-makers should know about

- A clear next step ("If approved, we will notify the selected vendor and begin contract negotiation by [date]")

This structure anticipates the questions your board will ask and answers them proactively.

When to Use This Framework (And When It's Overkill)

This full framework is appropriate for RFPs with 4+ vendor responses, projects over $25,000 annually, and decisions that will be reviewed by a board or committee.

For simpler situations—2–3 proposals for a small project with no board oversight—you can simplify. Use the same criteria and weights, but score informally (strong/adequate/weak) rather than numerically. The discipline of category-by-category evaluation still adds value even without the math.

How Bid Grid Automates This

If the framework above sounds like exactly the kind of structured work you'd rather not do manually, that's the problem Bid Grid was built to solve.

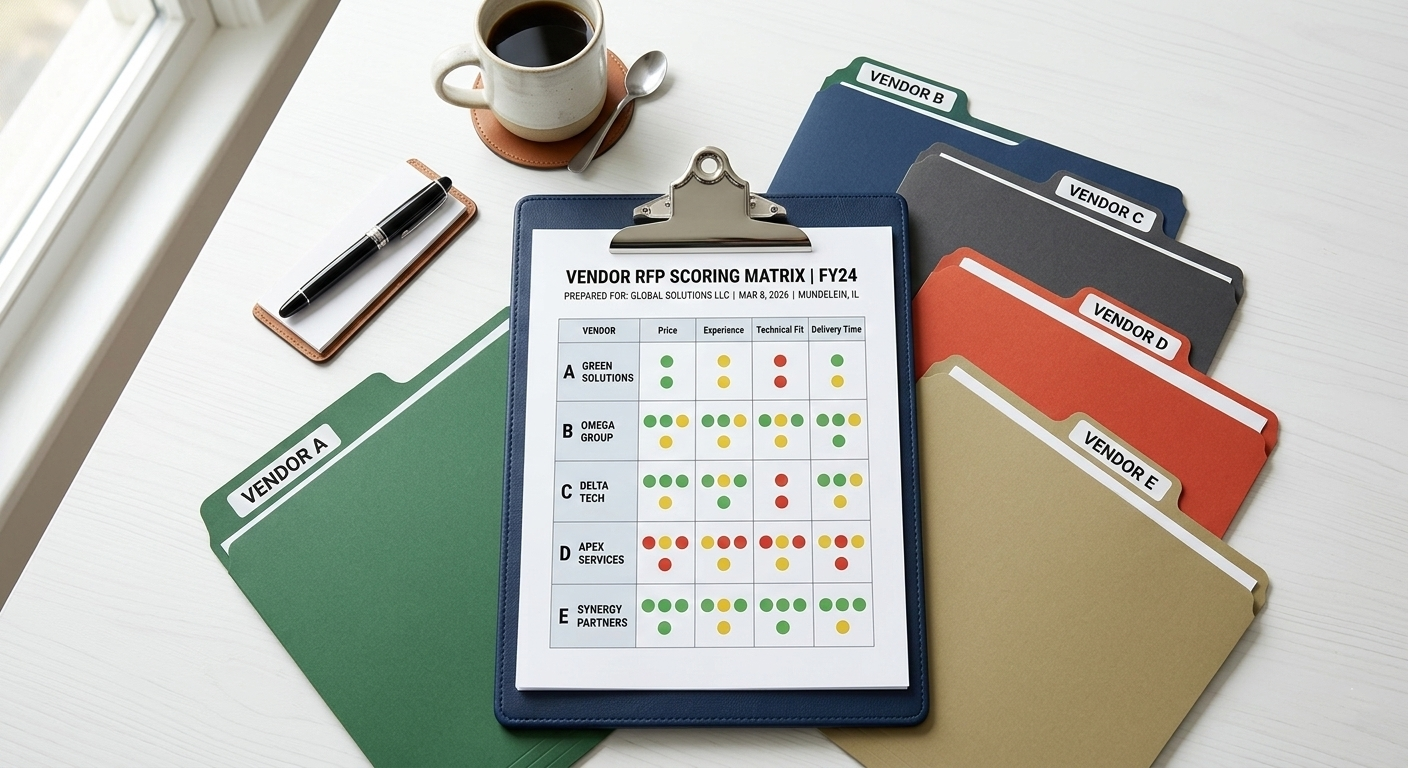

When vendors submit through Bid Grid's portal, their responses are already structured in a comparable format. The AI scores each proposal against your evaluation criteria, generates a color-coded comparison dashboard, and produces a Decision Report you can hand directly to your board.

You still control the criteria, the weights, and the final decision. The software handles the normalization, scoring, and reporting that would otherwise take you 10–15 hours.

Try it free on your next project and see whether automated scoring produces better results than your midnight spreadsheet.

Ready for a scoring system that doesn't require coffee at midnight? Start your first RFP free →

Frequently Asked Questions

How many evaluation criteria should I use?

Four to six categories is the sweet spot. Fewer than four oversimplifies the evaluation. More than six creates analysis paralysis and makes scoring unreliable because the weight per category becomes too small to meaningfully differentiate vendors.

Should price always be the highest-weighted criterion?

No. Price weighting should reflect how important cost is relative to quality for your specific project. For commodity services, price might be 40%. For professional services where vendor capability matters most, price might only be 20%.

What if two vendors are very close in final score?

If the top two vendors are within 0.3 points of each other on a 5-point scale, consider them essentially tied. Request clarification or best-and-final pricing from both, check references more thoroughly, or use qualitative factors to make the final decision.

Should I share scores with vendors who weren't selected?

This depends on your organization's policy and local requirements. Government entities are often required to share scores. Private organizations typically aren't, but offering a brief debrief to non-selected vendors is professional and helps maintain good vendor relationships for future solicitations.